@Zachary-Lowell-0 I am not sure exactly how I resolved this, but as far as I remember it was a px4_msgs versioning issue: I wasn't using the correct version.

Latest posts made by Darshit Desai

-

RE: Unable to see rostopics published by mpatoros2 foxy in my humble docker container (posted in Ask your questions right here!)

-

Version control the Linux distro, ideas (posted in VOXL SDK)

Hello Modal AI team,

I have a general question about the Linux distro: how is a Linux distro version-controlled? I know this is a simple Google search, but from what I have observed, the VOXL upgrade tarballs contain two folders, voxl-suite and system-image. I was under the impression that to make a system image a person can just use the dd command to create a backup.img file, but ModalAI seems to be using something more complicated than that. This comes from my very limited experience of making backup system images of a Raspberry Pi 3.

I have a bunch of drones and I am working on drone swarms, and it's always annoying to install the deb files one by one and bump the versions. Right now there haven't been any changes to the rootfs or the core drivers, but I wanted to know, for my own knowledge, whether there is an architecture I should follow. I also currently have to edit a number of bash files manually so that the DDS servers start the same way on all drones, which is pretty annoying.

Is there any tooling that you use for this, such as Docker, CI tools, cross-compilation, etc.?
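As a rough illustration of the "install the debs on every drone" chore described above, here is a minimal sketch that loops over a list of drones and installs one directory of packages on each. The host names, the `root` login, and the `/tmp/debs` staging path are hypothetical placeholders, not ModalAI tooling. It defaults to a dry run that only prints the commands.

```shell
#!/bin/sh
# Sketch only: push one directory of .deb packages to several drones
# and install them. Host names and paths are hypothetical.

# Print the command when DRY_RUN=1 (the default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# deploy_debs <local_deb_dir> <host>...
deploy_debs() {
    deb_dir=$1
    shift
    for host in "$@"; do
        # Copy the whole package directory to a staging path on the drone
        # (assumes /tmp/debs does not already exist there).
        run scp -r "$deb_dir" "root@$host:/tmp/debs"
        # Install every package from the staging path on the drone.
        run ssh "root@$host" "dpkg -i /tmp/debs/*.deb"
    done
}
```

Calling `deploy_debs ./debs uav1 uav2` prints the `scp` and `ssh` commands for each drone; setting `DRY_RUN=0` would actually run them. Keeping the package directory in a git repository would give every drone the same, reviewable set of versions.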

-

RE: Utilizing all CPUs on Voxl2 (posted in Ask your questions right here!)

@Mrunal-Sarvaiya Set the CPU mode to performance (voxl-set-cpu-mode) and then try running your experiment. Note that some CPU cores are slower than the others by design, even in perf mode.
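One way to see which cores are the slower ones is to read each core's maximum frequency from the standard Linux cpufreq sysfs files. This is a minimal sketch and assumes the VOXL 2 kernel exposes `cpuinfo_max_freq` under `/sys/devices/system/cpu`. The function name and its optional root argument are made up for illustration.

```shell
#!/bin/sh
# Sketch: print the maximum frequency of every CPU core.
# list_cpu_max_freq [sysfs_cpu_root]
list_cpu_max_freq() {
    root=${1:-/sys/devices/system/cpu}
    for f in "$root"/cpu[0-9]*/cpufreq/cpuinfo_max_freq; do
        # Skip cores that do not expose cpufreq information.
        [ -r "$f" ] || continue
        cpu=${f#"$root"/}   # e.g. cpu0/cpufreq/cpuinfo_max_freq
        cpu=${cpu%%/*}      # e.g. cpu0
        # cpufreq reports frequencies in kHz.
        printf '%s %s kHz\n' "$cpu" "$(cat "$f")"
    done
}
```

Running `list_cpu_max_freq` on the board lists one line per core. Cores with a lower number in the second column are the slower ones regardless of the selected governor.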

-

Unable to subscribe to vehicle_command_position topic (posted in Ask your questions right here!)

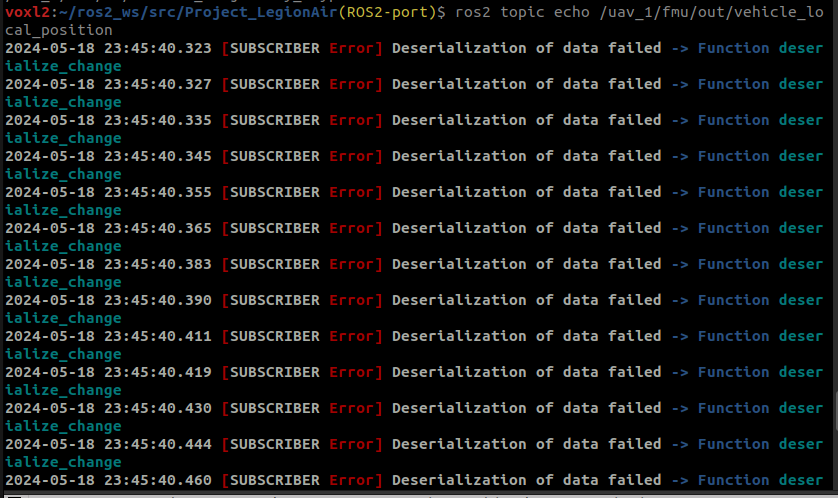

Hi @Moderator, I have been trying to subscribe to the vehicle_local_position ROS2 PX4 topic in my code, but that wasn't working, so I checked by echoing the topic directly from the terminal and it shows the error above.

After searching online, I found that the PX4 and px4_msgs versions might be mismatched. Since I am using voxl_ros2_foxy, any ideas why this would be happening?
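To check the suspected mismatch, the first step is to work out which px4_msgs release corresponds to the installed PX4. This is a minimal sketch that derives a candidate branch name from the voxl-px4 version string reported by voxl-version. It assumes px4_msgs release branches are named `release/<major>.<minor>`, which should be verified against the px4_msgs repository. The function name is hypothetical.

```shell
#!/bin/sh
# Sketch: map a voxl-px4 package version to a candidate px4_msgs branch.
# Assumption: px4_msgs branches follow the pattern release/<major>.<minor>.
# px4_release_branch <version>, e.g. 1.14.0-2.0.63
px4_release_branch() {
    ver=$1
    major=${ver%%.*}    # text before the first dot
    rest=${ver#*.}      # text after the first dot
    minor=${rest%%.*}   # text up to the next dot
    echo "release/$major.$minor"
}
```

For the voxl-px4 version in this post, `px4_release_branch 1.14.0-2.0.63` prints `release/1.14`, which can then be compared with the branch of px4_msgs checked out in the ROS2 workspace.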

Voxl version info

    voxl2:~/ros2_ws/src/Project_LegionAir(ROS2-port)$ voxl-version
    --------------------------------------------------------------------------------
    system-image: 1.7.1-M0054-14.1a-perf-nightly-20231025
    kernel:       #1 SMP PREEMPT Thu Oct 26 03:25:38 UTC 2023 4.19.125
    --------------------------------------------------------------------------------
    hw version:   M0054
    --------------------------------------------------------------------------------
    voxl-suite:   1.1.2
    --------------------------------------------------------------------------------
    Packages:
    Repo:  http://voxl-packages.modalai.com/ ./dists/qrb5165/sdk-1.1/binary-arm64/
    Last Updated: 2024-05-07 06:01:21
    List:
    libmodal-cv 0.4.0
    libmodal-exposure 0.1.0
    libmodal-journal 0.2.2
    libmodal-json 0.4.3
    libmodal-pipe 2.9.2
    libqrb5165-io 0.4.2
    libvoxl-cci-direct 0.2.1
    libvoxl-cutils 0.1.1
    mv-voxl 0.1-r0
    qrb5165-bind 0.1-r0
    qrb5165-dfs-server 0.2.0
    qrb5165-imu-server 1.0.1
    qrb5165-rangefinder-server 0.1.1
    qrb5165-slpi-test-sig 01-r0
    qrb5165-system-tweaks 0.2.3
    qrb5165-tflite 2.8.0-2
    voxl-bind-spektrum 0.1.0
    voxl-camera-calibration 0.5.3
    voxl-camera-server 1.8.9.1
    voxl-configurator 0.4.8
    voxl-cpu-monitor 0.4.7
    voxl-docker-support 1.3.0
    voxl-elrs 0.1.3
    voxl-esc 1.3.7
    voxl-feature-tracker 0.3.2
    voxl-flow-server 0.3.3
    voxl-gphoto2-server 0.0.10
    voxl-jpeg-turbo 2.1.3-5
    voxl-lepton-server 1.2.0
    voxl-libgphoto2 0.0.4
    voxl-libuvc 1.0.7
    voxl-logger 0.3.5
    voxl-mavcam-manager 0.5.3
    voxl-mavlink 0.1.1
    voxl-mavlink-server 1.3.2
    voxl-microdds-agent 2.4.1-0
    voxl-modem 1.0.8
    voxl-mongoose 7.7.0-1
    voxl-mpa-to-ros 0.3.7
    voxl-mpa-to-ros2 0.0.2
    voxl-mpa-tools 1.1.3
    voxl-neopixel-manager 0.0.3
    voxl-opencv 4.5.5-2
    voxl-portal 0.6.3
    voxl-px4 1.14.0-2.0.63
    voxl-px4-imu-server 0.1.2
    voxl-px4-params 0.3.3
    voxl-qvio-server 1.0.0
    voxl-remote-id 0.0.9
    voxl-ros2-foxy 0.0.1
    voxl-streamer 0.7.4
    voxl-suite 1.1.2
    voxl-tag-detector 0.0.4
    voxl-tflite-server 0.3.2
    voxl-utils 1.3.3
    voxl-uvc-server 0.1.6
    voxl-vision-hub 1.7.3
    voxl2-system-image 1.7.1-r0
    voxl2-wlan 1.0-r0
    --------------------------------------------------------------------------------

-

RE: Something wrong with my Starling (posted in Ask your questions right here!)

@Moderator The structural integrity of the sensor mounts is fine; I checked. I am attaching a few snapshots of inspect-qvio and inspect-imu, which also read OK.

Drive video of inspect-imu: https://drive.google.com/file/d/1pncppR93pNUVlOQUq3WYwkAaN5uJArgJ/view?usp=sharing

-

Something wrong with my Starling (posted in Ask your questions right here!)

Hi @Moderator, my Starling seems to be a bit jittery in position mode. Note that I had to kill the drone once and it fell from a height of 50 cm, and since then it has been like that. What could be the issue here?

Here's a video of that for your reference

https://drive.google.com/file/d/1IRxTXNa_IzSQwImsHnB2Iz0KVi9nhrQ8/view?usp=drivesdk

-

RE: VOXL2 microdds agent namespace (posted in Ask your questions right here!)

@Eric-Katzfey I know this is a question for the PX4 forum, but can you help me figure out how to run the https://github.com/PX4/px4_ros_com/blob/main/src/examples/offboard/offboard_control_srv.cpp service server here? As you know, I changed the namespace for my microdds client, and I am not sure whether that service server is running. I can't find the server when I run

`ros2 service list` or `ros2 service find`

-

RE: VOXL2 microdds agent namespace (posted in Ask your questions right here!)

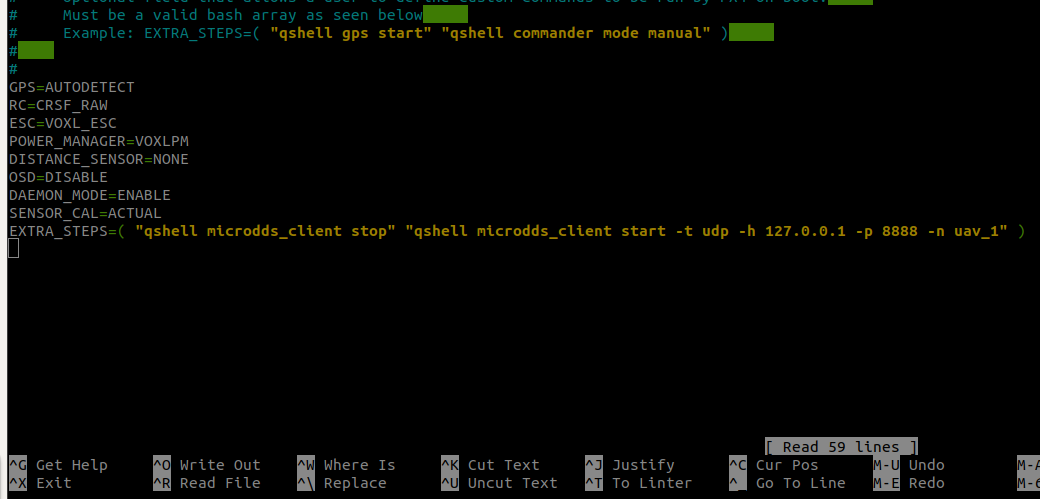

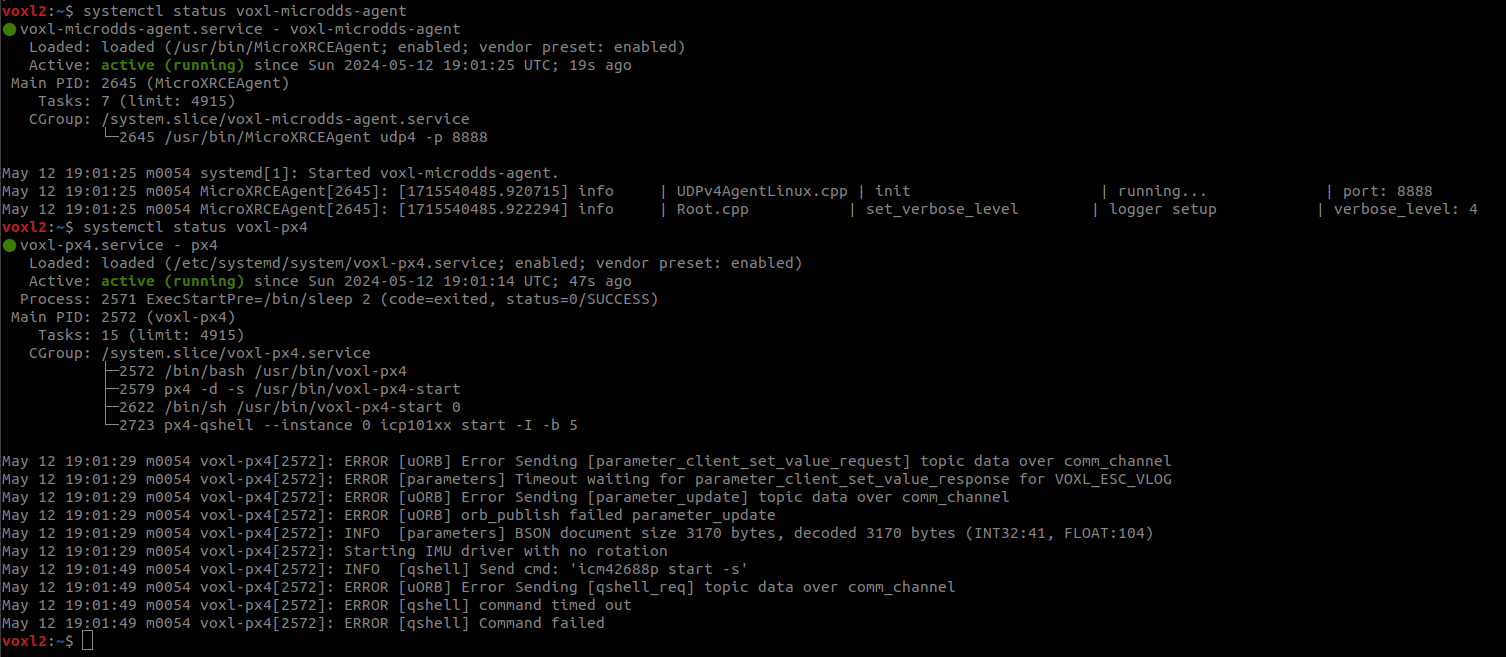

Hi @Eric-Katzfey, that worked. Now I want to export a variable that holds the namespace of the relevant drone and have the voxl-px4-start file pick up that variable and assign the namespace, but I am getting some errors when I do that. Could you have a look?
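A minimal sketch of the "pick up the variable" step follows: a helper that emits the `-n <namespace>` argument only when `PX4_NAMESPACE` is set and non-empty, so the client command stays valid when the variable is missing. The helper name is hypothetical, and whether the PX4 startup script accepts command substitution like this is an assumption. One thing worth checking: the bashrc posted below returns early for non-interactive shells, and a systemd service does not normally read `~/.bashrc`, so an `export` placed there may never reach the start script.

```shell
#!/bin/sh
# Sketch: build the optional namespace argument for microdds_client.
# Prints "-n <namespace>" when PX4_NAMESPACE is set and non-empty,
# and prints nothing otherwise.
microdds_ns_args() {
    if [ -n "${PX4_NAMESPACE:-}" ]; then
        printf '%s %s\n' "-n" "$PX4_NAMESPACE"
    fi
}

# Hypothetical use inside a start script:
#   microdds_client start -t udp -h 127.0.0.1 -p 8888 $(microdds_ns_args)
```

With `PX4_NAMESPACE=uav_1` the helper prints `-n uav_1`. With the variable unset it prints nothing, which avoids passing a bare `-n` with no value to the client.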

Here's my bashrc file

    #!/bin/bash
    # ModalAI default bashrc file
    #VERSION 1.2

    # If not running interactively, don't do anything
    case $- in
        *i*) ;;
          *) return;;
    esac

    # don't put duplicate lines or lines starting with space in the history.
    # See bash(1) for more options
    HISTCONTROL=ignoreboth

    # append to the history file, don't overwrite it
    shopt -s histappend

    # for setting history length see HISTSIZE and HISTFILESIZE in bash(1)
    HISTSIZE=1000
    HISTFILESIZE=2000

    # check the window size after each command and, if necessary,
    # update the values of LINES and COLUMNS.
    shopt -s checkwinsize

    # ubuntu ssh sets TERM to xterm-256color which screws with ROS
    # change it to linux spec
    export TERM=linux

    if [ -d /home/root/.profile.d/ ]; then
        for i in /home/root/.profile.d/* ; do
            if [ -d $i ]; then
                for j in $i/* ; do
                    if [ -f $j ]; then
                        . $j
                    fi
                done
            else
                . $i
            fi
        done
    fi

    # Put user-specific tweaks here or in a file in ~/.profile.d/
    export PX4_NAMESPACE="uav_1"

Here's my voxl-px4-start file

    #!/bin/sh
    # PX4 commands need the 'px4-' prefix in bash.
    # (px4-alias.sh is expected to be in the PATH)
    . px4-alias.sh

    echo -e "\n*************************"
    echo "GPS: $GPS"
    echo "RC: $RC"
    echo "ESC: $ESC"
    echo "POWER MANAGER: $POWER_MANAGER"
    echo "DISTANCE SENSOR: $DISTANCE_SENSOR"
    echo "OSD: $OSD"
    echo "EXTRA STEPS:"
    for i in "${EXTRA_STEPS[@]}"
    do
        echo -e "\t$i"
    done
    echo -e "*************************\n"

    # In order to just exit after starting the uorb / muorb modules define
    # the environment variable MINIMAL_PX4. (e.g. export MINIMAL_PX4=1)
    # This is useful for testing / debug where you may want to start drivers
    # and modules manually from the px4 command shell
    if [ ! -z $MINIMAL_PX4 ]; then
        /bin/echo "Running minimal script"
        exit 0
    fi

    # Figure out what platform we are running on.
    PLATFORM=`/usr/bin/voxl-platform 2> /dev/null`
    RETURNCODE=$?
    if [ $RETURNCODE -ne 0 ]; then
        # If we couldn't get the platform from the voxl-platform utility then check
        # /etc/version to see if there is an M0052 substring in the version string. If so,
        # then we assume that we are on M0052.
        VERSIONSTRING=$(</etc/version)
        M0052SUBSTRING="M0052"
        if [[ "$VERSIONSTRING" == *"$M0052SUBSTRING"* ]]; then
            PLATFORM="M0052"
        fi
    fi

    # We can only run on M0052, M0054, or M0104 so exit with error if that is not the case
    if [ $PLATFORM = "M0052" ]; then
        /bin/echo "Running on M0052"
    elif [ $PLATFORM = "M0054" ]; then
        /bin/echo "Running on M0054"
    elif [ $PLATFORM = "M0104" ]; then
        /bin/echo "Running on M0104"
    else
        /bin/echo "Error, cannot determine platform!"
        exit 0
    fi

    # Sleep a little here. A lot happens when the uorb and muorb start
    # and we need to make sure that it all completes successfully to avoid
    # any possible race conditions.
    /bin/sleep 1

    param select /data/px4/param/parameters

    # Load in all of the parameters that have been saved in the file
    param load

    # Start logging and use timestamps for log files when possible.
    # Add the "-e" option to start logging immediately. Default is
    # to log only when armed. Caution must be used with the "-e" option
    # because if power is removed without stopping the logger gracefully then
    # the log file may be corrupted.
    logger start -t

    # IMU (accelerometer / gyroscope)
    if [ "$PLATFORM" == "M0104" ]; then
        /bin/echo "Starting IMU driver with rotation 12"
        qshell icm42688p start -s -R 12
    else
        /bin/echo "Starting IMU driver with no rotation"
        qshell icm42688p start -s
    fi

    # Start Invensense ICP 101xx barometer built on to VOXL 2
    qshell icp101xx start -I -b 5

    # Auto detect the magnetometer. If one or both of these devices
    # are not connected it will fail but not cause any harm.
    /bin/echo "Looking for qmc5883l magnetometer"
    qshell qmc5883l start -R 10 -X -b 1
    /bin/echo "Looking for ist8310 magnetometer"
    qshell ist8310 start -R 10 -X -b 1

    # GPS and magnetometer
    if [ "$GPS" != "NONE" ]; then
        # On M0052 the GPS driver runs on the apps processor
        if [ $PLATFORM = "M0052" ]; then
            gps start -d /dev/ttyHS2
        # On M0054 and M0104 the GPS driver runs on SLPI DSP
        else
            qshell gps start
        fi
    fi

    # Auto detect an ncp5623c i2c RGB LED. If one isn't connected this will
    # fail but not cause any harm.
    /bin/echo "Looking for ncp5623c RGB LED"
    qshell rgbled_ncp5623c start -X -b 1 -f 400 -a 56

    # We do not change the value of SYS_AUTOCONFIG but if it does not
    # show up as used then it is not reported to QGC and we get a
    # missing parameter error.
    param touch SYS_AUTOCONFIG

    # ESC driver
    if [ "$ESC" == "VOXL_ESC" ]; then
        /bin/echo "Starting VOXL ESC driver"
        qshell voxl_esc start
    elif [ "$ESC" == "VOXL2_IO_PWM_ESC" ]; then
        if [ "$RC" == "M0065_SBUS" ]; then
            /bin/echo "Starting VOXL IO for PWM ESC with SBUS RC"
            qshell voxl2_io start
        else
            /bin/echo "Starting VOXL IO for PWM ESC without SBUS RC"
            qshell voxl2_io start -e
        fi
    else
        /bin/echo "No ESC type specified, not starting an ESC driver"
    fi

    # RC driver
    if [ "$RC" == "FAKE_RC_INPUT" ]; then
        /bin/echo "Starting fake RC driver"
        qshell rc_controller start
    elif [ "$RC" == "CRSF_RAW" ]; then
        /bin/echo "Starting CRSF RC driver"
        qshell crsf_rc start -d 7
    elif [ "$RC" == "CRSF_MAV" ]; then
        /bin/echo "Starting TBS crossfire RC - MAV Mode"
        qshell mavlink_rc_in start -m -p 7 -b 115200
    elif [ "$RC" == "SPEKTRUM" ]; then
        /bin/echo "Starting Spektrum RC"
        # On M0052 the RC driver runs on the apps processor
        if [ $PLATFORM = "M0052" ]; then
            rc_input start -d /dev/ttyHS1
        # On M0054 and M0104 the RC driver runs on SLPI DSP
        else
            qshell spektrum_rc start
        fi
    elif [ "$RC" == "GHST" ]; then
        /bin/echo "Starting GHST RC driver"
        qshell ghst_rc start -d 7
    elif [ "$RC" == "M0065_SBUS" ]; then
        if [ $PLATFORM = "M0052" ]; then
            apps_sbus start
        elif [ "$ESC" != "VOXL2_IO_PWM_ESC" ]; then
            /bin/echo "Attempting to start M0065 SBUS RC driver for original M0065 FW"
            qshell dsp_sbus start
            retVal=$?
            if [ $retVal -ne 0 ]; then
                /bin/echo "Starting M0065 SBUS RC driver for original M0065 FW failed"
                /bin/echo "Attempting to start M0065 SBUS RC driver for new M0065 FW"
                qshell voxl2_io start -d -p 7
            fi
        else
            /bin/echo "M0065 SBUS RC driver already started with PWM ESC start"
        fi
    fi

    if [ "$DISTANCE_SENSOR" == "LIGHTWARE_SF000" ]; then
        # Make sure to set the parameter SENS_EN_SF0X to 8 for sf000/b sensor
        qshell lightware_laser_serial start -d 7
    fi

    if [ "$POWER_MANAGER" == "VOXLPM" ]; then
        # APM power monitor
        qshell voxlpm start -X -b 2
    fi

    # Optional distance sensor on spare i2c
    # qshell vl53l0x start -X -b 4
    # qshell vl53l1x start -X -b 4

    # Start all of the processing modules on DSP
    qshell sensors start
    qshell ekf2 start
    qshell mc_pos_control start
    qshell mc_att_control start
    qshell mc_rate_control start
    qshell mc_hover_thrust_estimator start
    qshell mc_autotune_attitude_control start
    qshell land_detector start multicopter
    qshell manual_control start
    qshell control_allocator start
    qshell load_mon start

    # Only start the rc_update module if an actual RC driver
    # is publishing input_rc topics. Otherwise for external RC
    # over Mavlink this isn't needed.
    if [ "$RC" != "EXTERNAL" ]; then
        qshell rc_update start
    fi

    qshell commander start

    # This is needed for altitude and position hold modes
    qshell flight_mode_manager start

    # Start all of the processing modules on the applications processor
    dataman start
    navigator start
    load_mon start

    # This bridge allows raw data packets to be sent over UART to the ESC
    modal_io_bridge start

    # Start microdds_client for ros2 offboard messages from agent over localhost
    microdds_client start -t udp -h 127.0.0.1 -p 8888 -n $PX4_NAMESPACE

    # On M0052 there is only one IMU. So, PX4 needs to
    # publish IMU samples externally for VIO to use.
    if [ $PLATFORM = "M0052" ]; then
        imu_server start
    fi

    # start the onboard fast link to connect to voxl-mavlink-server
    mavlink start -x -u 14556 -o 14557 -r 100000 -n lo -m onboard

    # slow down some of the fastest streams
    mavlink stream -u 14556 -s HIGHRES_IMU -r 10
    mavlink stream -u 14556 -s ATTITUDE -r 10
    mavlink stream -u 14556 -s ATTITUDE_QUATERNION -r 10
    mavlink stream -u 14556 -s GLOBAL_POSITION_INT -r 30
    mavlink stream -u 14556 -s SCALED_PRESSURE -r 10

    # start the slow normal mode for voxl-mavlink-server to forward to GCS
    mavlink start -x -u 14558 -o 14559 -r 100000 -n lo

    mavlink boot_complete

    # Optional MSP OSD driver for DJI goggles
    # This is only supported on M0054 (with M0125 accessory board)
    if [ "$OSD" == "ENABLE" ]; then
        /bin/echo "Starting OSD driver"
        msp_osd start -d /dev/ttyHS1
    fi

    # Start optional EXTRA_STEPS
    for i in "${EXTRA_STEPS[@]}"
    do
        $i
    done

The errors that I get:

-

RE: VOXL2 microdds agent namespace (posted in Ask your questions right here!)

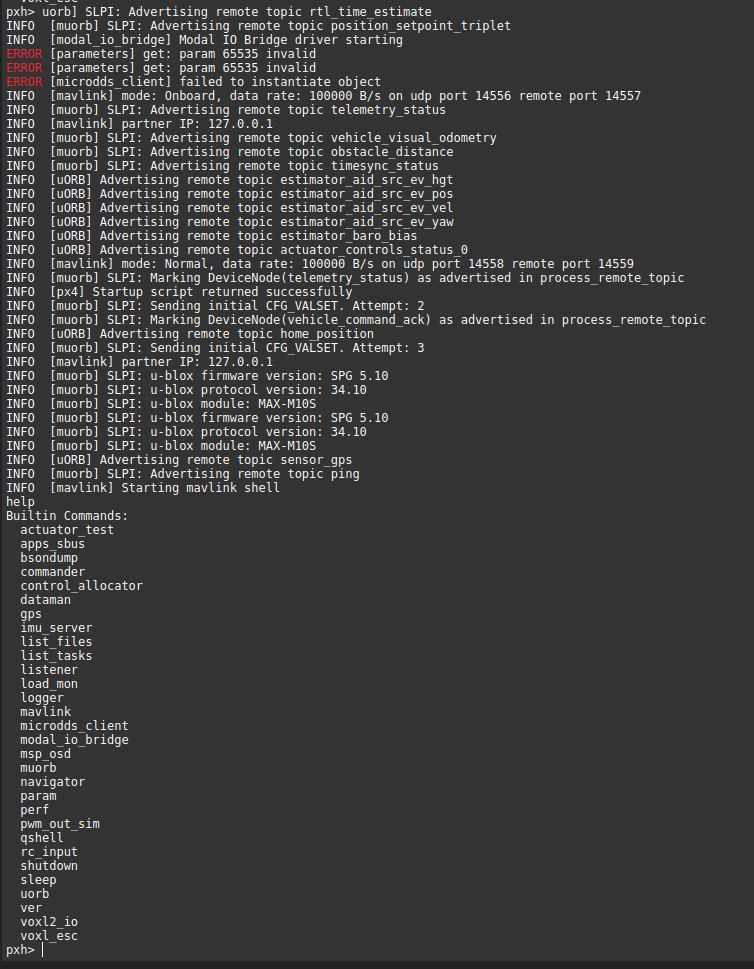

@Moderator The only way to change the microdds namespace is through QGC. I tried that and it worked, but the issue is that I need to type it in manually on each boot of the FMU, as shown below:

Microdds service stopped and started with a namespace

Topics remapped on VOXL2

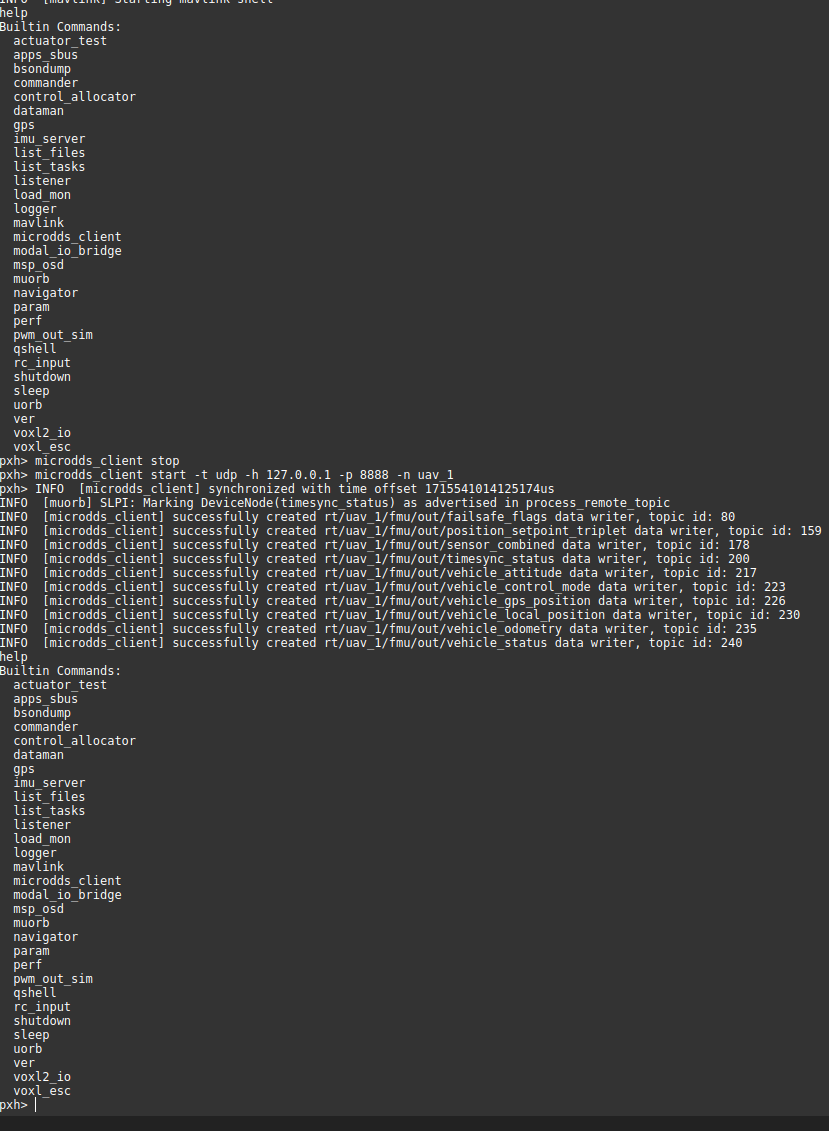

So I thought I could change voxl-px4.conf to include this as a qshell command, but that didn't work either, as shown below:

voxl-px4.conf file v1

voxl-px4.conf file v2

Both versions resulted in an error when the systemd service was restarted or the board was power-cycled.

-

RE: VOXL2 microdds agent namespace (posted in Ask your questions right here!)

@Moderator Actually, this is related to the Micro XRCE-DDS Agent that streams PX4 FMU uORB topics as ROS2 topics. There is a way to change the namespace for that, but I am not sure how to do it on ModalAI hardware. MPATOROS2 is perfectly fine; I can change its namespace with the following command, so that is not the issue here:

`ros2 run voxl_mpa_to_ros2 voxl_mpa_to_ros2_node --ros-args -r __ns:=/drone3`

I am talking about the case where no mpatoros2 node is running and the Microdds agent streams the PX4 uORB topics, as shown below: