Seeking Reference Code for MPA Integration with RTSP Video Streams for TFLite Server

-

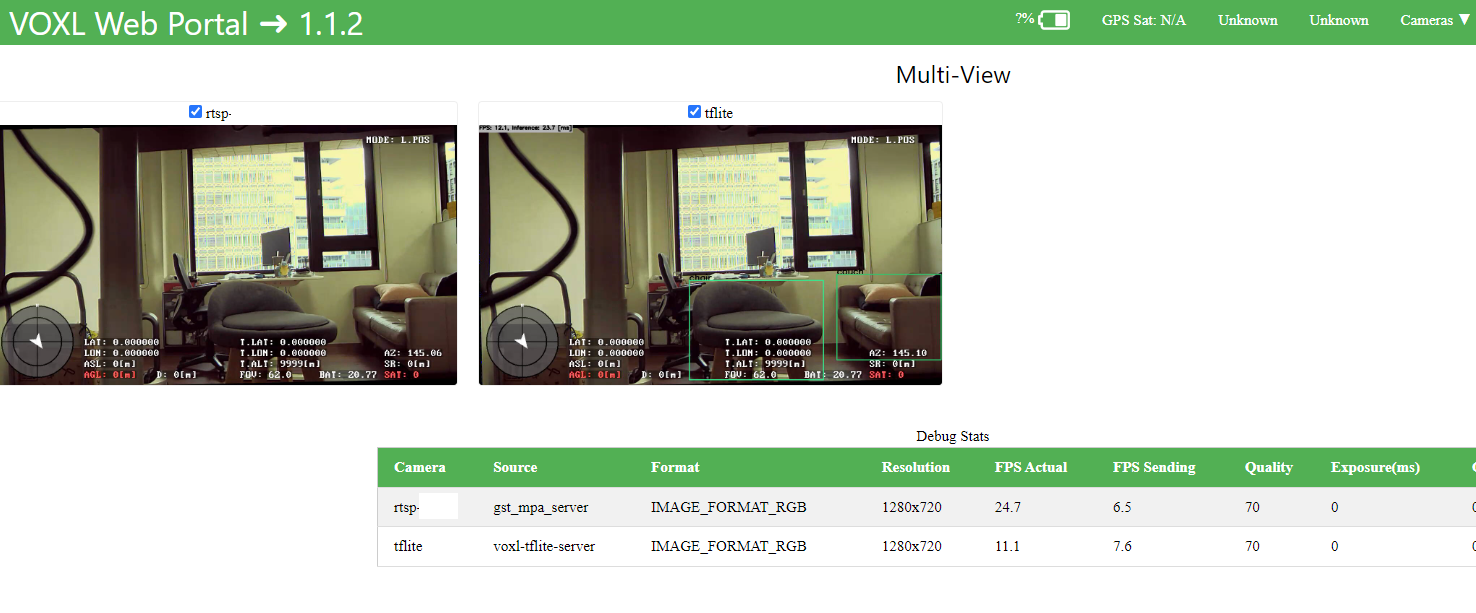

Following up on the original topic of integrating an RTSP stream with the tflite server.

I found that the problem is in the sample code you provided: in the metadata, the format is set to RGB.

However, tflite (https://gitlab.com/voxl-public/voxl-sdk/services/voxl-tflite-server/-/blob/master/src/inference_helper.cpp?ref_type=heads#L305) only handles NV12, NV21, YUV422, and RAW8.

So I need to make the RTSP stream NV12, and then tflite can take care of the rest.

`! ... ! ... ! videoconvert ! video/x-raw,format=NV12 ! appsink`

Is there a proper way to handle this format problem? Even after the NV12 frame gets into tflite, it still has to be resized and converted to RGB.

Thanks!

-


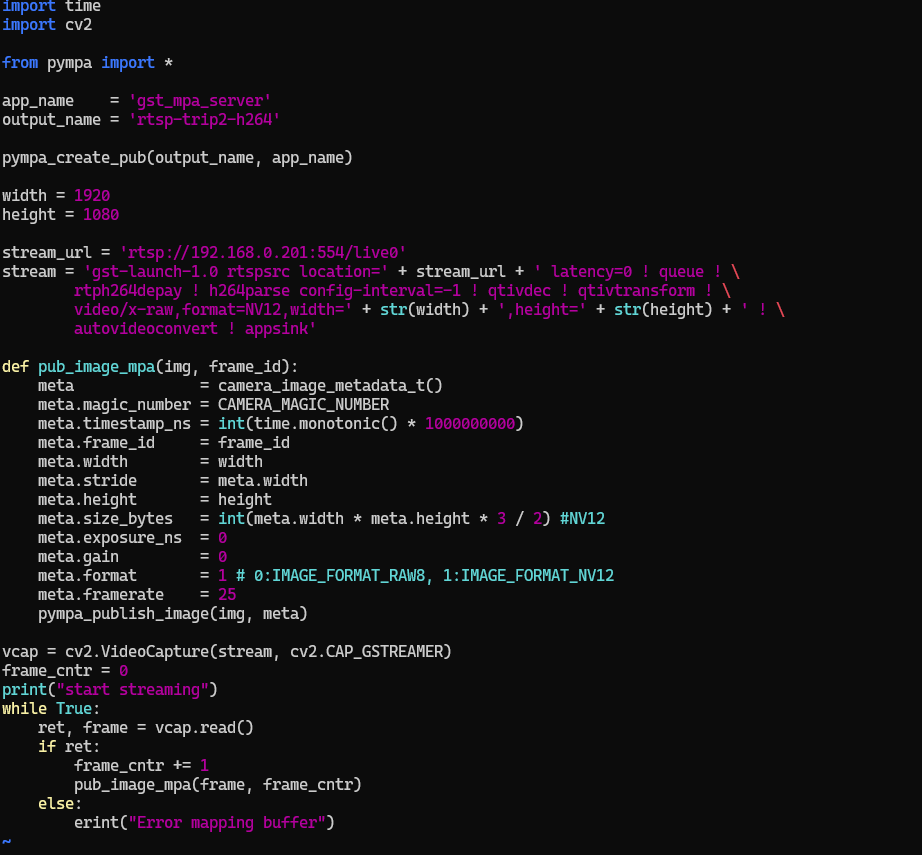

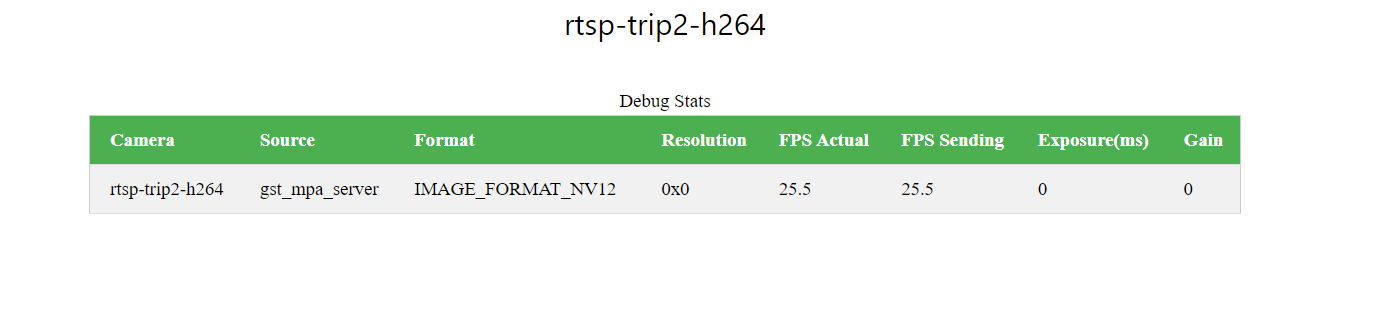

Hi, I tried using the HW-based decoder with HW resize.

I also tried SW decoding, but cv2.VideoCapture(stream, cv2.CAP_GSTREAMER) doesn't work.

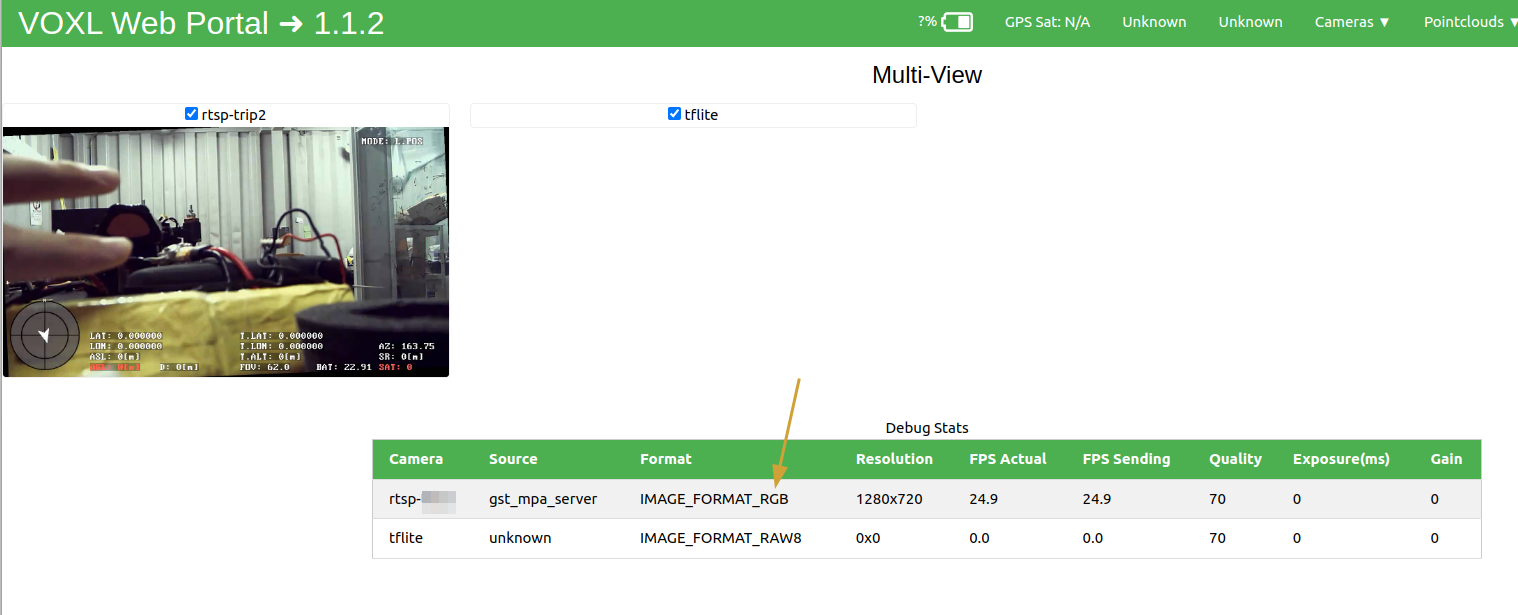

But nothing shows up in Portal.

The following uses gi to launch GStreamer and upscale from 720p to 1080p.

-

@anghung, I believe the issue is with telling `appsink` the correct format that you need. Looking at the OpenCV source code (https://github.com/opencv/opencv/blob/4.x/modules/videoio/src/cap_gstreamer.cpp#L1000), you can see the supported formats.

Please try inserting `! video/x-raw, format=NV12 !` between `autovideoconvert` and `appsink`. Let me know if that works!
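As a rough illustration of that suggestion, here is a minimal sketch that places a raw-video caps filter just before `appsink` in a pipeline string. The helper name `insert_caps_before_appsink` and the `videotestsrc` example pipeline are assumptions made for illustration only, not the pipeline used in this thread, and the naive split on `!` assumes no element property contains that character.

```python
def insert_caps_before_appsink(pipeline: str, pixel_format: str = "NV12") -> str:
    """Return the pipeline with a raw-video caps filter placed just before appsink.

    Hypothetical helper: it only edits the pipeline description string and
    does not talk to GStreamer at all.
    """
    elements = [element.strip() for element in pipeline.split("!")]
    if not elements[-1].startswith("appsink"):
        raise ValueError("pipeline must end with an appsink element")

    caps = f"video/x-raw,format={pixel_format}"
    # Leave the pipeline untouched if the caps filter is already in place.
    if len(elements) >= 2 and elements[-2].replace(" ", "") == caps:
        return " ! ".join(elements)
    return " ! ".join(elements[:-1] + [caps, elements[-1]])


# Example pipeline (an assumption; substitute your own RTSP elements).
example = "videotestsrc ! autovideoconvert ! appsink"
patched = insert_caps_before_appsink(example)
print(patched)
```

The resulting string is what would be passed to `cv2.VideoCapture(patched, cv2.CAP_GSTREAMER)`, provided OpenCV was built with GStreamer support.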

Edit: it seems you may have already tried this. If so, did it work? I could not understand the exact problem: do you need both NV12 and RGB?

Alex

-

The inference helper code in voxl-tflite-server only handles NV12, YUV422, NV21, and RAW8.

When the RTSP stream is read into an OpenCV frame, it is originally in BGR format, so I use videoconvert with video/x-raw,format=NV12. That way the tflite-server can handle the MPA data.

However, inside tflite-server, the NV12 is then converted to RGB.

Is there something that can make this process more efficient?
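For context on what that conversion has to read, here is a minimal sketch of the NV12 memory layout: a full-resolution Y plane followed by a half-resolution plane of interleaved U and V bytes. The function name `nv12_offsets` is hypothetical, and the sketch assumes a tightly packed buffer with no row padding (stride equal to width), which may not hold for every camera or decoder.

```python
def nv12_offsets(x: int, y: int, width: int, height: int) -> tuple:
    """Return byte offsets of the (Y, U, V) samples for pixel (x, y).

    Assumes a tightly packed NV12 buffer: width*height luma bytes, then
    width*height/2 bytes of interleaved U,V pairs shared by 2x2 pixel blocks.
    """
    if width % 2 or height % 2:
        raise ValueError("NV12 needs even width and height")
    if not (0 <= x < width and 0 <= y < height):
        raise IndexError("pixel outside the frame")

    y_offset = y * width + x
    # Each chroma row covers two image rows and is `width` bytes long.
    uv_row_start = width * height + (y // 2) * width
    u_offset = uv_row_start + (x // 2) * 2
    v_offset = u_offset + 1  # NV21 would swap U and V here
    return y_offset, u_offset, v_offset


print(nv12_offsets(3, 2, 4, 4))  # (11, 22, 23)
```

Every RGB output pixel needs one sample from each of these three positions, which is the per-pixel work the tflite-server does when it receives NV12.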

Thanks!

-

@anghung, if you would like to try, you can add a handler for the RGB image type here: https://gitlab.com/voxl-public/voxl-sdk/services/voxl-tflite-server/-/blob/master/src/inference_helper.cpp?ref_type=heads#L282 and publish RGB from the python script. It should be simple enough to try, based on the other examples of using YUV and RAW8 images in the same file.

However, you will then be sending more data via MPA (RGB is 3 bytes per pixel, YUV is 1.5 bytes per pixel). Even so, sending more data via MPA should be more efficient than doing unnecessary conversions between YUV and RGB. So, if you can get the video capture to return RGB (not BGR), you could publish the RGB from the python script and use it directly with the modified `inference_helper.cpp`.

Alex
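To put numbers on the bytes-per-pixel comparison, here is a small worked sketch. The 1920x1080 resolution and the 30 fps rate are assumptions chosen for illustration, and the helper names are hypothetical.

```python
# Bytes per pixel as stated in the post above (YUV 4:2:0 formats such as NV12).
BYTES_PER_PIXEL = {"RGB": 3.0, "NV12": 1.5}


def frame_bytes(fmt: str, width: int, height: int) -> int:
    """Size of one tightly packed frame in bytes."""
    return int(width * height * BYTES_PER_PIXEL[fmt])


def stream_mb_per_s(fmt: str, width: int, height: int, fps: float) -> float:
    """Raw data rate in megabytes (10^6 bytes) per second."""
    return frame_bytes(fmt, width, height) * fps / 1e6


for fmt in ("RGB", "NV12"):
    size = frame_bytes(fmt, 1920, 1080)
    rate = stream_mb_per_s(fmt, 1920, 1080, 30)
    print(f"{fmt}: {size} bytes per frame, {rate:.3f} MB/s at 30 fps")
# RGB: 6220800 bytes per frame, 186.624 MB/s at 30 fps
# NV12: 3110400 bytes per frame, 93.312 MB/s at 30 fps
```

So publishing RGB doubles the data moved through the pipe, which is the cost being traded against skipping the color conversion.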

-

Okay, I will try that.

I'm also thinking about implementing RTSP to MPA in C++.

Is there any guidance?

Thanks!

-

@anghung, if you want to implement RTSP directly in C++, you don't need MPA. You can still use OpenCV to receive the RTSP stream. You should be able to use the `cv::VideoCapture` class to get the RTSP frames decoded into your C++ application, just like you did in the python example. Then, you can use OpenCV to change the format of the image, if needed, or just request the correct format using the GStreamer pipeline when you create the `VideoCapture` instance. Finally, use the resulting image for processing.

You can start by modifying `voxl-tflite-server` to subscribe to the RTSP stream (as an option, instead of MPA) or just make your new application based on `voxl-tflite-server`. This would be a nice feature if you get it working and would like to contribute it back.
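The "change the format of the image" step for the BGR frames that OpenCV returns is just a channel swap. Below is a dependency-free sketch of that swap on a packed byte buffer; the function name `bgr_to_rgb` is hypothetical. With OpenCV itself, the equivalent call is `cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)` in Python or `cv::cvtColor` with `cv::COLOR_BGR2RGB` in C++.

```python
def bgr_to_rgb(bgr: bytes) -> bytes:
    """Swap the first and third channel of a packed 3-bytes-per-pixel buffer."""
    if len(bgr) % 3:
        raise ValueError("buffer length must be a multiple of 3")
    rgb = bytearray(bgr)
    rgb[0::3] = bgr[2::3]  # R goes to the first byte of each pixel
    rgb[2::3] = bgr[0::3]  # B goes to the third byte of each pixel
    return bytes(rgb)


# Two pixels in BGR order: pure blue, then (B=0, G=128, R=64).
pixels_bgr = bytes([255, 0, 0, 0, 128, 64])
print(list(bgr_to_rgb(pixels_bgr)))  # [0, 0, 255, 64, 128, 0]
```
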

Alex

-

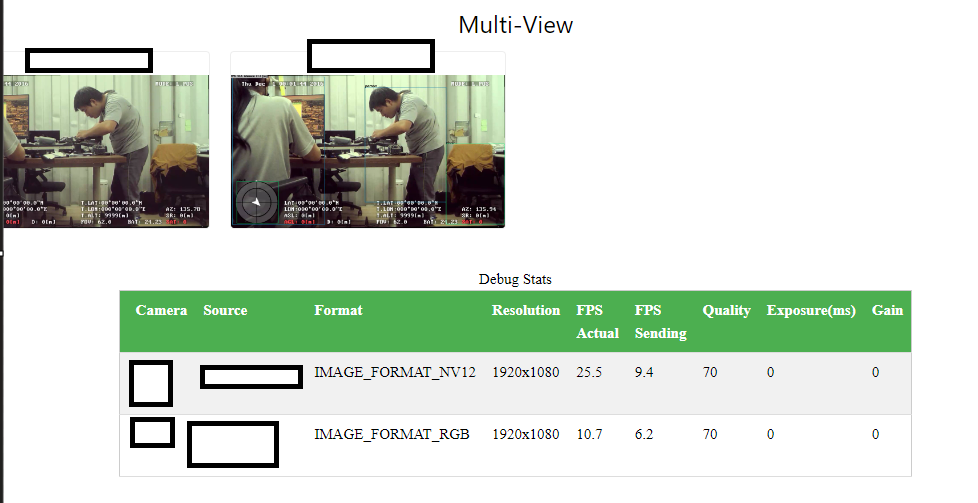

Of course, I have been using `cv::VideoCapture` on my laptop for testing.

Because I am on a business trip, I asked a colleague to make the modifications in `voxl-tflite-server` and run some tests. However, the results did not match our expectations.

As you mentioned, this function https://gitlab.com/voxl-public/voxl-sdk/services/voxl-tflite-server/-/blob/master/src/inference_helper.cpp?ref_type=heads#L282 should be able to handle RGB format directly. So we just added another case to the `switch` to prevent the function from returning `false`.

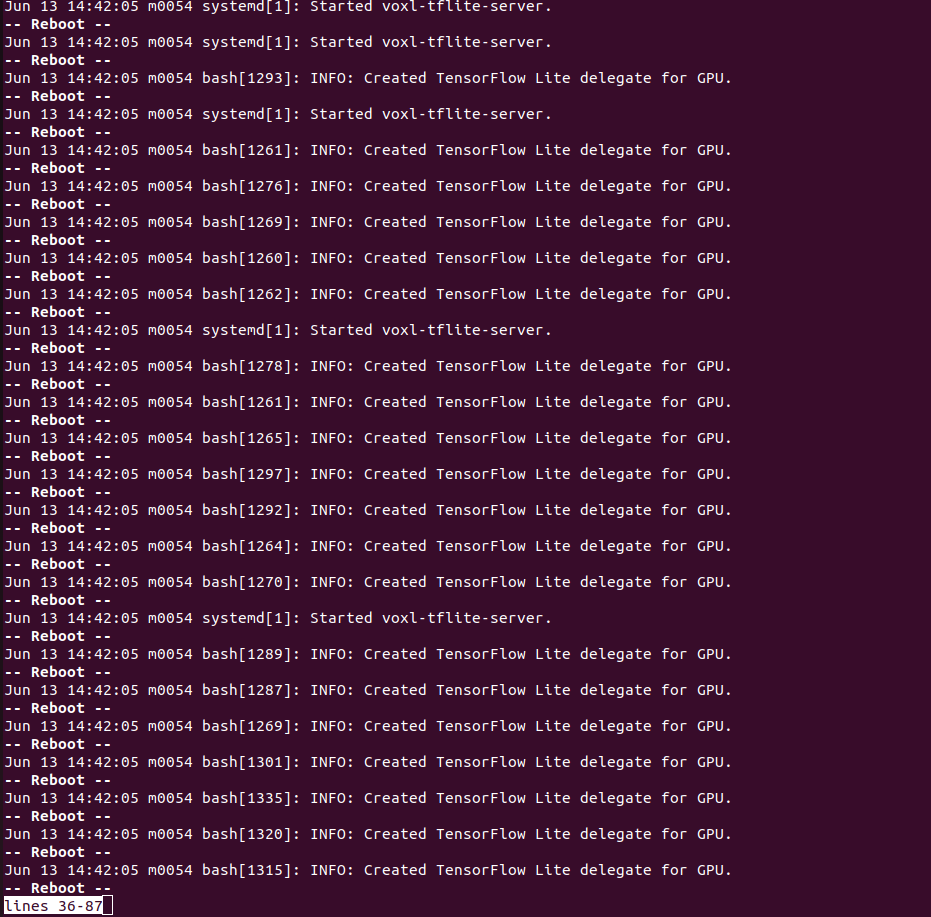

We send RGB format to MPA and the video looks fine in Portal, but tflite can't handle it properly. The journal messages show that the tflite service restarts repeatedly.

The new `voxl-tflite-server` we built handles NV12 correctly, just like the old one. Given this situation, what should we do to provide more detailed information?

We are happy to contribute our successfully tested code. However, we have a limited number of VOXL2 boards available for development, as some are currently in the R&A process. We have already planned to purchase more VOXL2 boards to meet our development needs.

Thanks.
