Improving VIO accuracy


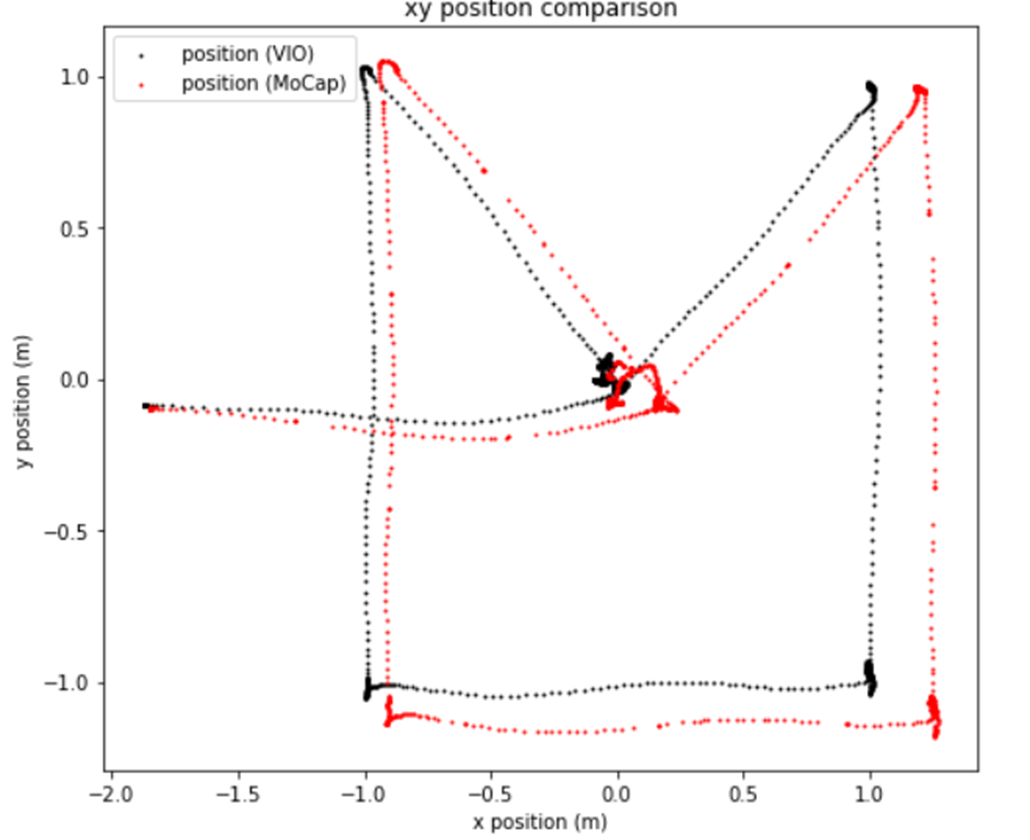

I've been working with the RB5 drone for autonomous flight and computer vision applications. I recently ran a basic test to quantify the accuracy of VIO. In this test, the drone took off from its starting location, flew to the positions (0,0,0), (1,1,1), (1,-1,1), (-1,-1,1) and (-1,1,1), and then returned to (0,0,0). These positions are in global coordinates established by a motion capture system.

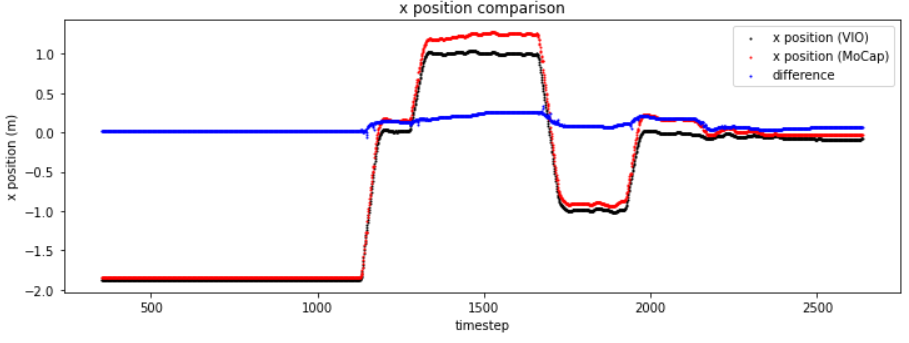

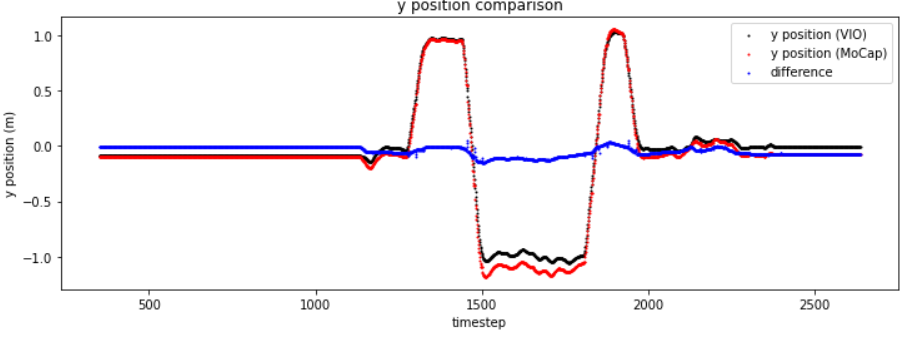

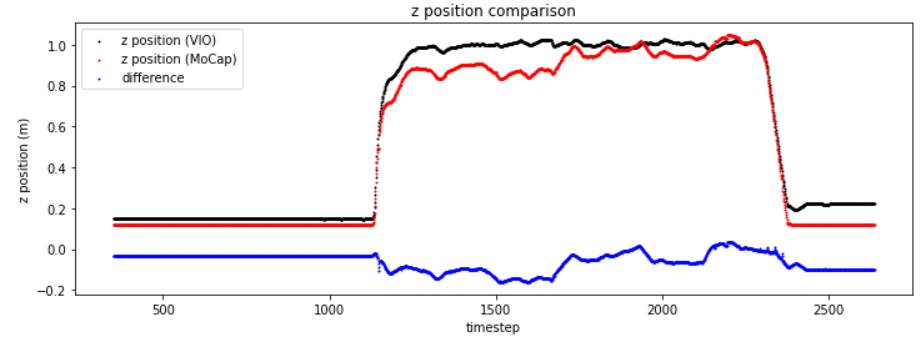

When the drone powered up, I obtained a homogeneous transformation from the initial motion capture pose estimate and applied it to the drone's VIO pose estimate. The drone then compared this transformed VIO pose estimate with my setpoint position inputs for flight, while motion capture provided the ground-truth position. I plotted the resulting trajectories; in each plot, black is the VIO pose estimate, red is the motion capture pose estimate and blue is the difference between them.
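As a rough illustration of the alignment step described above, here is a minimal sketch using plain 4x4 homogeneous transforms. It assumes (this is not stated in the post) that the VIO odometry frame coincides with the drone's body frame at power-up, so the mocap pose captured at that moment maps every later VIO pose into the mocap frame. All poses are hypothetical numbers, and rotation is restricted to yaw to keep it short.

```python
"""Sketch: express a VIO pose in the motion-capture (world) frame.

Assumption (not from the original post): the VIO odometry frame coincides
with the drone's body frame at power-up, so the mocap pose captured at
power-up, T_world_odom, maps every later VIO pose into the world frame:
    T_world_body(t) = T_world_odom @ T_odom_body(t)
"""
import math

Matrix = list[list[float]]


def make_transform(yaw: float, x: float, y: float, z: float) -> Matrix:
    """4x4 homogeneous transform: rotation about z by `yaw` (rad) plus translation."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [
        [c, -s, 0.0, x],
        [s, c, 0.0, y],
        [0.0, 0.0, 1.0, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 4x4 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def position(t: Matrix) -> tuple[float, float, float]:
    """Translation part of a homogeneous transform."""
    return (t[0][3], t[1][3], t[2][3])


# Mocap pose of the drone at power-up (hypothetical numbers).
T_world_odom = make_transform(math.pi / 2, 2.0, 0.5, 0.0)
# A later VIO pose, e.g. from /mavros/local_position/pose (hypothetical numbers).
T_odom_body = make_transform(0.0, 1.0, 0.0, 1.0)

T_world_body = matmul(T_world_odom, T_odom_body)
vio_in_world = position(T_world_body)

# Estimation error: mocap ground truth minus transformed VIO (the "blue" trace).
mocap_in_world = (2.1, 1.4, 1.05)  # hypothetical mocap sample
error = tuple(m - v for m, v in zip(mocap_in_world, vio_in_world))
```

With a full 3D orientation, `make_transform` would be built from the mocap quaternion instead of a single yaw angle; the composition step stays the same.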

Overall, I can see that the VIO is working, and I know it won't be perfect, but I'd like to explore ways to improve its accuracy. I am also confident this is not a PID control error: the estimated (VIO) pose converges onto the setpoint inputs I provide, so the drone clearly thinks it is in the right position when, according to the motion capture pose estimate, it is not.
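The argument that this is an estimation problem rather than a control problem can be made quantitative. The total error seen by motion capture splits exactly into a tracking part (what the controller sees) and an estimation part (what VIO gets wrong). This is a minimal sketch with hypothetical sample values, assuming the VIO position has already been transformed into the mocap frame.

```python
"""Sketch: separate control error from estimation error at one time step.

The total position error splits exactly into two parts:
    setpoint - mocap = (setpoint - vio) + (vio - mocap)
                       tracking error    estimation error
A small tracking error with a large estimation error points at VIO, not PID.
"""
import math

Vec = tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def norm(v: Vec) -> float:
    return math.sqrt(sum(c * c for c in v))


def split_error(setpoint: Vec, vio: Vec, mocap: Vec) -> tuple[float, float, float]:
    """Return (tracking, estimation, total) error magnitudes in metres."""
    tracking = sub(setpoint, vio)   # what the position controller sees
    estimation = sub(vio, mocap)    # what VIO gets wrong
    total = sub(setpoint, mocap)    # what the mocap system sees
    return norm(tracking), norm(estimation), norm(total)


# Hypothetical sample near the (1, 1, 1) waypoint.
tracking, estimation, total = split_error(
    (1.0, 1.0, 1.0),    # setpoint
    (0.99, 1.01, 1.0),  # VIO estimate in the mocap frame
    (0.85, 1.10, 0.98), # mocap ground truth
)
```

Running this over every logged sample and plotting the tracking and estimation magnitudes separately makes the distinction visible for the whole flight rather than a single point.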

Some options I'm considering:

- changing PX4 parameters associated with VIO

- building one or more ROS nodes to improve the pose estimate. I am currently only using the /mavros/local_position/pose topic. Perhaps I could also use information such as /mavros/local_position/velocity_body and fuse the two, for example.
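One way the fusion idea in the second option could look is a simple complementary filter: dead-reckon with the body-frame velocity rotated into the local frame, then pull the result toward each new position measurement. This is a minimal sketch, not a ROS node; the quaternion convention (x, y, z, w), the assumption that the pose orientation rotates body vectors into the local frame, and the gain `ALPHA` are all assumptions for illustration.

```python
"""Sketch: a position/velocity complementary filter (not a ROS node).

Assumptions, not from the original post:
- pose samples carry an orientation quaternion (x, y, z, w) that rotates
  body-frame vectors into the local frame;
- velocity_body samples are in the body frame, so they are rotated into the
  local frame before integrating;
- ALPHA is a hypothetical tuning gain.
"""

Vec = tuple[float, float, float]
Quat = tuple[float, float, float, float]  # (x, y, z, w)

ALPHA = 0.2  # hypothetical: weight given to each new position measurement


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def rotate(q: Quat, v: Vec) -> Vec:
    """Rotate v by unit quaternion q (equivalent to q * v * q^-1)."""
    qv, w = q[:3], q[3]
    t = tuple(2.0 * c for c in cross(qv, v))
    u = cross(qv, t)
    return tuple(v[i] + w * t[i] + u[i] for i in range(3))


class ComplementaryFilter:
    def __init__(self, initial_position: Vec) -> None:
        self.p = initial_position

    def predict(self, v_body: Vec, q: Quat, dt: float) -> None:
        """Dead-reckon with the body velocity rotated into the local frame."""
        v_local = rotate(q, v_body)
        self.p = tuple(self.p[i] + v_local[i] * dt for i in range(3))

    def correct(self, p_meas: Vec) -> None:
        """Blend the estimate toward the measured position."""
        self.p = tuple(self.p[i] + ALPHA * (p_meas[i] - self.p[i]) for i in range(3))
```

Because both topics ultimately come from the same VIO solution, a filter like this mostly smooths the estimate; it cannot remove drift that is already present in its inputs.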

---

Hi,

This all comes down to the VIO algorithm. You could make major improvements by post-processing the data afterwards, for example by detecting a reset and continuing from the last valid position (we do this in vvpx4). However, changing the PX4 params or applying other fusion techniques that use only the same sensors as the original VIO algorithm will likely not yield any improvement.
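The reset-handling idea can be sketched as follows. This is a hypothetical illustration, not the vvpx4 implementation: it assumes a reset shows up as a position jump larger than a made-up `JUMP_THRESHOLD` between consecutive samples, and on each reset it picks an offset so the output continues from the last valid position.

```python
"""Sketch: hide VIO resets by continuing from the last valid position.

Hypothetical illustration, not the vvpx4 source. A reset is assumed to
appear as a jump larger than JUMP_THRESHOLD between consecutive samples.
"""

Vec = tuple[float, float, float]

JUMP_THRESHOLD = 0.5  # hypothetical: metres between consecutive samples


def dist(a: Vec, b: Vec) -> float:
    return sum((a[i] - b[i]) ** 2 for i in range(3)) ** 0.5


def stitch_resets(samples: list[Vec]) -> list[Vec]:
    """Return positions with reset jumps removed."""
    out: list[Vec] = []
    offset = (0.0, 0.0, 0.0)
    prev_raw = None
    for raw in samples:
        if prev_raw is not None and dist(raw, prev_raw) > JUMP_THRESHOLD:
            # Reset detected: choose the offset so this sample lines up with the last output.
            offset = tuple(out[-1][i] - raw[i] for i in range(3))
        out.append(tuple(raw[i] + offset[i] for i in range(3)))
        prev_raw = raw
    return out


# Hypothetical trace: VIO resets to the origin after reaching x = 2.0.
trace = [(1.8, 0.0, 0.0), (1.9, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.1, 0.0, 0.0)]
stitched = stitch_resets(trace)
```

A distance threshold can misfire on fast but legitimate motion, so if the VIO reports its own reset or quality state, triggering on that would be the more robust choice.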

As for improving the VIO algorithm itself, QVIO's mvVISLAM package is proprietary code (even to us; we don't have the source) that we usually quote at 2% error over distance traveled. That seems to match the test you've run here: you've flown a bit under 10 meters and it looks like you've drifted ~0.15-0.2 meters. We've been working on and experimenting with other algorithms internally, but don't have a firm date for when these will be fully tested and published. It's worth mentioning that most of this experimentation is aimed at open-sourcing our solution and adding support for other features (like multiple cameras), and that 2% error is generally considered very good, especially in real-world environments.
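The 2% figure can be checked against the test directly. This short sketch sums the straight-line distances between the waypoints listed in the question, assuming they are in metres and ignoring the leg from the starting location to (0, 0, 0) and any overshoot.

```python
"""Sketch: compare the observed drift with the quoted 2%-of-distance figure.

Waypoints are the ones from the test in the question (assumed to be metres);
the leg from the starting location to (0, 0, 0) is not included.
"""
import math

waypoints = [(0, 0, 0), (1, 1, 1), (1, -1, 1), (-1, -1, 1), (-1, 1, 1), (0, 0, 0)]

# Sum of straight-line segment lengths between consecutive waypoints.
path_length = sum(math.dist(a, b) for a, b in zip(waypoints, waypoints[1:]))
expected_drift = 0.02 * path_length

print(f"path length ~ {path_length:.2f} m, 2% drift ~ {expected_drift:.2f} m")
# prints: path length ~ 9.46 m, 2% drift ~ 0.19 m
```

The resulting budget of roughly 0.19 m falls inside the ~0.15-0.2 m drift visible in the plots.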

The two main things you can do out of the box to make sure your VIO works as well as it can are ensuring that it has accurate camera and IMU calibrations, and ensuring that your extrinsics file is accurate.